Why AI Gets It Wrong: The Real Reasons Behind AI Hallucinations and Misinformation

You asked an intelligence chatbot a simple question. It answered with confidence. Then you found out it was completely wrong.

You are not alone. Millions of people experience this every day.. It is one of the most talked-about problems in technology right now. Artificial intelligence models can sound like they know what they are talking about they can even cite sources. Still produce information that is factually incorrect, outdated or entirely made up.

This is not a mistake. It is a characteristic of how these systems work.

Understanding why artificial intelligence gets it wrong does not just make you a smarter artificial intelligence user. It helps you avoid mistakes verify outputs more effectively and use these tools where they genuinely shine.

Lets break it down.

What Does "Incorrect Artificial Intelligence Output" Actually Mean?

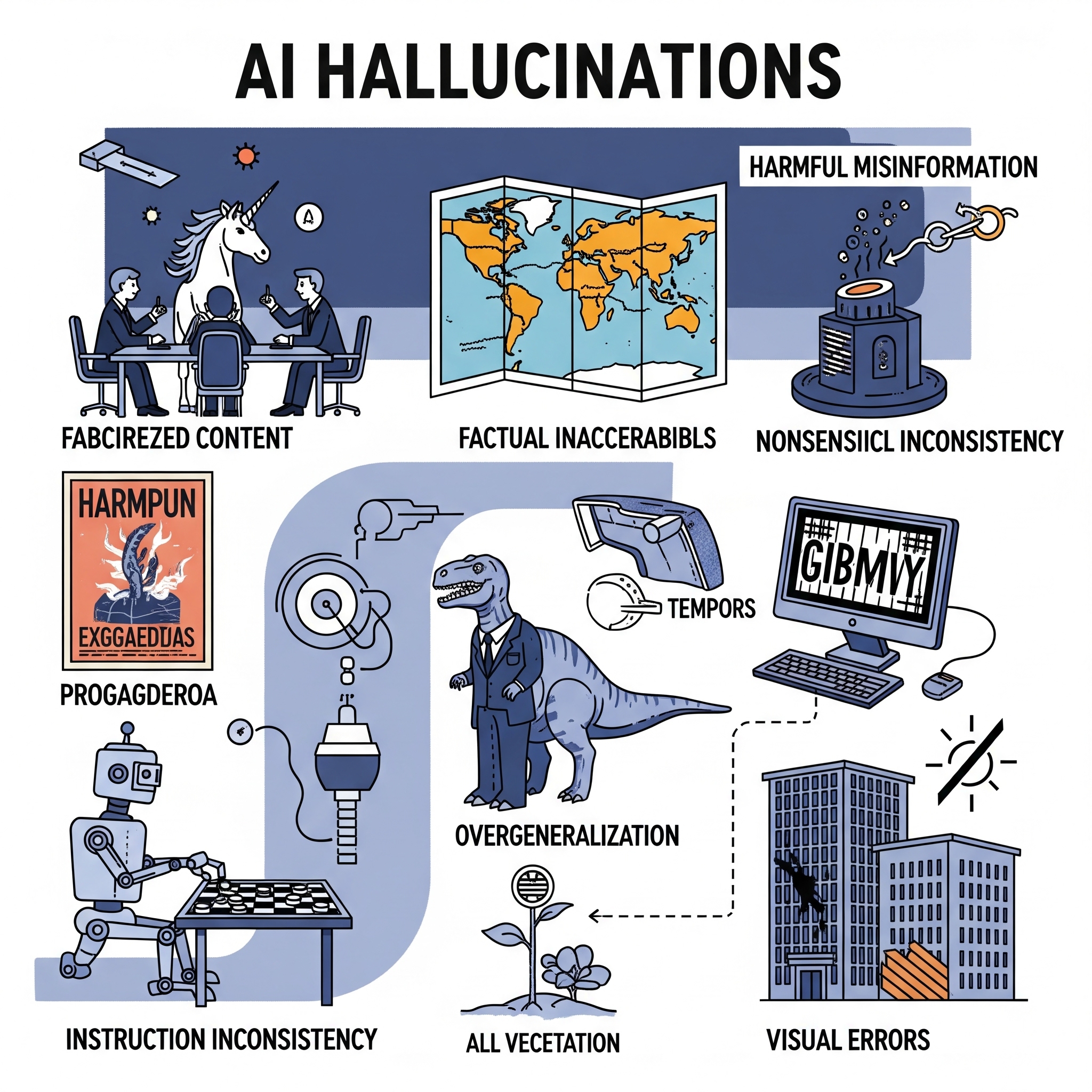

Before diving into causes it is worth distinguishing between the types of errors artificial intelligence models produce:

Hallucinations. Made-up facts, citations or invented statistics delivered with complete confidence

Outdated information. Accurate at some point in the past but no longer correct

Context misunderstanding. The artificial intelligence answered a question than you intended to ask

Bias amplification. Skewed outputs reflecting imbalances in the training data

Logical errors. Reasoning chains that lead to incorrect conclusions

Each type has a different root cause. And knowing which one you are dealing with changes how you respond to it.

1. Training Data Limitations: Bad Data In, Bad Data Out

Artificial intelligence language models learn everything they know from the data they were trained on. That data. Scraped from websites, books, academic papers, forums and more. Is enormous but imperfect.

The core problem: Real-world data contains errors, contradictions, outdated facts and outright misinformation. When an artificial intelligence trains on billions of examples it does not learn "the truth”. It learns patterns in how information is expressed. If enough sources say something the model learns to repeat it.

What this looks like in practice:

A medical question might return advice that was outdated by five years

A historical query might blend two events into one narrative

A legal question might produce guidance that applies to the wrong jurisdiction

How to protect yourself:

Cross-reference artificial intelligence outputs with primary sources especially for health, finance or legal matters. Treat artificial intelligence responses the way you would treat a first-draft Wikipedia article: starting point, not final authority.

2. Knowledge Cutoffs: Artificial Intelligence Lives in the Past

Every major artificial intelligence model has a training cutoff. A point in time after which it has no knowledge of world events. GPT-4, Claude, Gemini. All of them were trained on data that stops at a date.

This creates a failure mode: Artificial intelligence models will confidently answer questions about recent events using outdated information often without flagging that the information might be stale.

Common knowledge cutoff failures:

Recommending software versions that're now obsolete

Providing prices, statistics or regulatory information that has since changed

Describing company leadership government policies or market conditions that no longer apply

Missing entirely new research that contradicts older findings

The dangerous nuance:

Artificial intelligence models do not always know they do not know something recent. They fill in the gaps with sounding information rather than saying "I do not have current data on this."

How to protect yourself:

Always ask the intelligence what its knowledge cutoff is before relying on time-sensitive information. For anything with a "component. Current prices, recent events, active regulations. Verify with live sources.

3. Hallucinations: When Artificial Intelligence Makes Things Up

This is the phenomenon that gets the most press. And for good reason. Artificial intelligence hallucinations are instances where the model generates information that has no basis in reality with remarkable confidence.

Famous examples include:

Lawyers using intelligence-generated briefs that cited cases that never existed

Artificial intelligence "quoting" books or studies that were entirely fabricated

Chatbots inventing biographical details about real people

Why does this happen?

Large language models do not retrieve information the way a search engine does. They generate text by predicting what word or phrase is most likely to come based on patterns in training data. When a model does not have an answer it does not say "I do not know”. It generates what sounds most likely.

This is sometimes called " parroting”. The model produces statistically plausible text, not necessarily factual text.

The obscure or specific a question, the higher the hallucination risk. Ask an intelligence about Shakespeare's major plays and it will mostly get it right. Ask it about a niche paper or a local business's founding date and the risk of fabrication rises sharply.

How to protect yourself:

Be especially skeptical of numbers, citations, quotes and dates

Ask the artificial intelligence to explain its reasoning or source. Then independently verify.

Use artificial intelligence tools that cite real-time sources.

4. Poorly Framed Prompts

Sometimes the artificial intelligence is not wrong. It answered a different question than you meant to ask.

Natural language is inherently ambiguous. When you ask an intelligence a question it interprets that question based on the most statistically likely interpretation. If your phrasing is vague technical jargon is mixed with language or context is missing the artificial intelligence fills in the gaps on its own. Often incorrectly.

Example:

Asking "What's the best way to handle Python exceptions?" could return answers about programming or. In a defined context. About managing literal pythons in a wildlife facility.

Realistically vague business questions, ambiguous pronoun references or questions that do not specify time frame, geography or context all lead to artificial intelligence outputs that are technically an answer. Just not to the question you needed answered.

How to protect yourself:

Provide context in your prompts

Specify geography, time frame, industry and audience when relevant

Use iterative prompting: ask the artificial intelligence to confirm its understanding before giving you the full answer

Give the artificial intelligence a "role”. Telling it to act as a financial analyst or medical professional helps calibrate the response

5. Bias in Training Data

Artificial intelligence models do not just absorb facts. They absorb the political and demographic biases embedded in their training data. The internet for all its breadth over-represents languages, geographies, demographics and viewpoints.

What this means in practice:

Artificial intelligence outputs may reflect English-language perspectives as default "neutral"

Underrepresented communities, languages and regions get less accurate results

Historical biases in sourcing get baked into the model

Sentiment and opinion in training data can skew how an artificial intelligence frames responses on contested topics

This is especially relevant for anyone using artificial intelligence to produce content about diverse global audiences conduct market research outside major Western markets or research topics with cultural nuance.

How to protect yourself:

Recognize that artificial intelligence outputs are not culturally neutral. Engage subject-matter experts for any content where cultural accuracy matters. Ask the intelligence explicitly to consider non-Western or underrepresented perspectives.

6. Underfitting: Much or Too Little Generalization

These are technical terms that have real-world consequences.

Overfitting happens when an artificial intelligence model learns its training data too specifically. Essentially memorizing patterns than understanding them. This can cause it to apply particular logic to situations where it does not fit.

Underfitting happens when the model is too generalized. It lacks the understanding needed to give accurate answers in specialized domains.

Both create outputs just in different ways:

An overfitted model might apply a business principle from one industry incorrectly to another

An underfitted model might give generic surface-level answers to complex technical questions

This is why artificial intelligence tools purpose-built for specific domains tend to outperform general-purpose chatbots for specialized tasks.

7. Reasoning Errors: When Logic Goes Wrong

Artificial intelligence models can make errors in multi-step reasoning tasks. Those involving math, formal logic, causal inference or long chains of "if/then" thinking.

This is a documented limitation. While artificial intelligence models have improved dramatically at reasoning they can still fail on problems that require:

Keeping track of multiple variables across long reasoning chains

Understanding cause and effect

Recognizing when a question contains false premises

Applying common sense outside of typical language patterns

Real-world implication:

Be cautious when using artificial intelligence for complex financial modeling technical troubleshooting with many interdependent variables or any decision where a step-, by-step logical chain matters.

8. Prompt Injection and Adversarial Inputs

This is a less commonly discussed but increasingly relevant failure mode, especially for AI systems embedded in products and workflows.

Prompt injection occurs when malicious or misleading input in a user's query or in content the AI is asked to process, overrides or manipulates the AI's behavior.

At a simpler level, users can accidentally "confuse" AI models by providing contradictory information in a prompt, causing the model to weigh incorrect assumptions heavily in its response.

This is why AI-generated summaries of external documents can sometimes reflect the document's errors or biases rather than correcting them, the model processes the content as input, not as something to evaluate critically.

9. Lack of True Understanding

Here's the deepest cause of all: AI language models don't understand anything.

They are extraordinarily sophisticated pattern-matching systems. They learn to predict language, not to understand reality. There is no internal model of the world, no lived experience, no common sense the way humans have it.

The difference between Artificial Intelligence errors and human errors is important. When a human expert makes a mistake they usually have a feeling that something's not right. Artificial Intelligence does not have this check. It generates text that's likely to be correct based on statistics but it does so with the same confidence whether it is right or completely wrong.

This is why researchers are working on connecting Artificial Intelligence outputs to world data that can be verified. Artificial Intelligence systems that have access to the internet in time are also important for reducing certain types of errors.

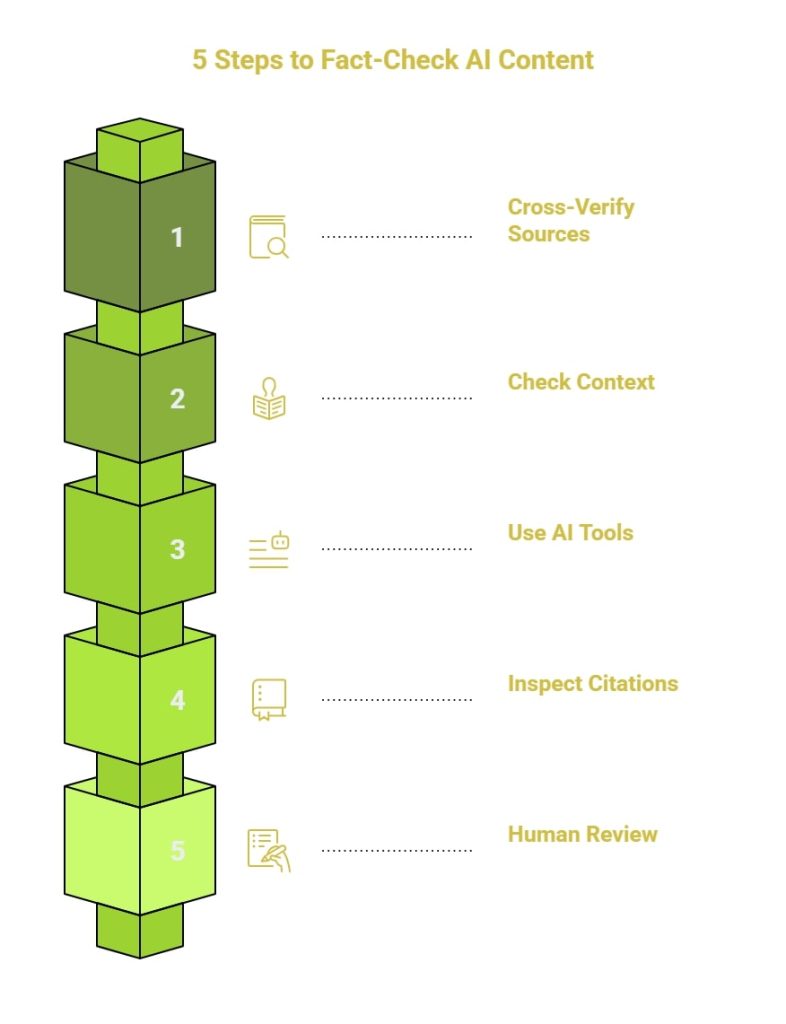

How to Use Artificial Intelligence Safely: A Practical Checklist

Considering all of this here is how you can minimize your risk when working with Artificial Intelligence generated information:

✅ Verify information before you publish or act on it. Think of Artificial Intelligence output as a draft, not the final answer.

✅ Use tools that are specific to an area. For legal or financial questions use Artificial Intelligence tools that are trained in those areas or consult with human experts.

✅ Ask when the models training data was last updated. For time information confirm when the training data ends.

✅ Be clear in your requests. Provide context, constraints, location, time frame and audience.

✅ Check the reasoning behind the answer, not the answer itself. Ask the Artificial Intelligence to show its work then evaluate each step.

✅ Verify information, with sources. Use the Artificial Intelligence to identify what to look for then check with sources.

✅ Do not assume that confidence means accuracy. Artificial Intelligence systems can be just as confident when they are wrong as when they are right.

✅ Use search tools that are assisted by Artificial Intelligence. Platforms that connect Artificial Intelligence to time web sources reduce the risk of outdated information.

Artificial Intelligence models are tools but they are not perfect. They are trained on data and predict what text should come next rather than providing verified facts.

Understanding why Artificial Intelligence makes mistakes does not mean you should stop using it. It means you should use it with an understanding of its limitations. Know when it can fail. Verify the outputs. Use the tool for the task.

The effective Artificial Intelligence users are not the ones who trust it the most. They are the ones who understand its limitations enough to know exactly when to trust it and when to check the Artificial Intelligence output.

At Engage AI our specialty is cutting through the noise. Helping businesses like yours put AI to work in ways that deliver real measurable results. Learn more about our services and book a consultation today.